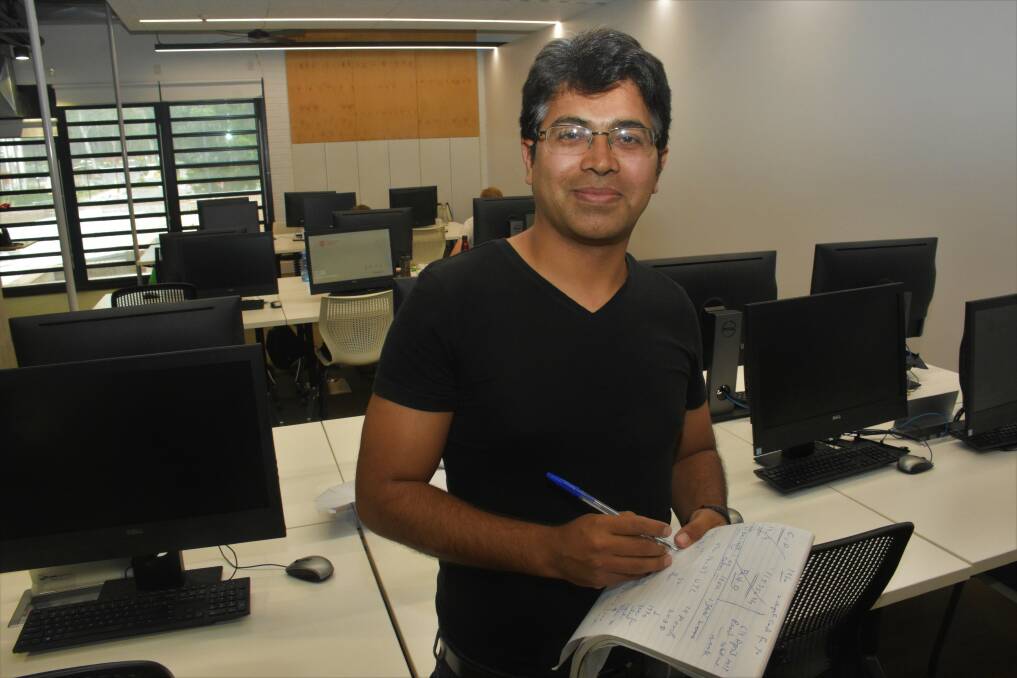

Twenty years ago, computing student Anwaar Ulhaq watched global news reports in awe as worldwide hysteria mounted over a predicted Y2K bug that could dismantle computing systems across the globe.

The Millennium Bug stemmed from a widespread computer programming shortcut that was expected to cause chaos as systems rolled over from the year 1999 to 2000.

Dr Ulhaq is now a software engineering lecturer in the Charles Sturt University School of Computing and Mathematics. He vividly remembers the hysteria in the lead-up to midnight on December 31, 1999.

"Programmers feared that computers would stop working," said Dr Ulhaq.

"Instead of allowing four digits for the year, many computer programs only allowed two digits."

This seemingly simple shortcut created a universally complicated problem: rather than rolling over to the year 2000 at midnight, computers would revert to 1900.
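The rollover can be illustrated with a minimal Python sketch. It assumes a legacy program that stores only the last two digits of the year and always prepends "19" when displaying it. The function names are hypothetical and chosen for illustration only; they do not come from any real system.

```python
def next_two_digit_year(yy: int) -> int:
    """Advance a two-digit year field; only two digits fit, so 99 wraps to 0."""
    return (yy + 1) % 100


def legacy_full_year(yy: int) -> int:
    """Assumed legacy behaviour: the century is hard-coded as 1900."""
    return 1900 + yy


def next_four_digit_year(year: int) -> int:
    """The fix described in the article: keep all four digits of the year."""
    return year + 1


stored = 99                               # the year 1999 as a legacy system stores it
rolled = next_two_digit_year(stored)      # the field wraps around to 0
print(legacy_full_year(stored))           # 1999
print(legacy_full_year(rolled))           # 1900, not 2000
print(next_four_digit_year(1999))         # 2000
```

With four digits stored, the same increment simply produces 2000, which is why widening the year field resolved the problem.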

"This is a critical issue for timed systems such as automated systems in transport, communication and power plants. It could even interfere with banking," Dr Ulhaq explained.

"Fortunately the world realised it was a big issue before it happened. Some countries were well prepared such as the United States of America and Australia by designing a software patch converting two-digit code into four-digit code."

Looking back 20 years, Dr Ulhaq said there were only minor issues, but the bug became a crucial lesson.

"I think it's a successful example of risk management because scientists looked at the problem in time, made patches and we were ready for it," he said.

"I think the legacy of Y2K is about how to deal with risk. Risk management is now a part of every design from the beginning.

"There is a trade off between regulation and innovation but Y2K is telling us that they both need to be balanced rather than focusing on one. If we think too much on risk we won't be able to innovate but the opposite effect can also happen. Nowadays we try to have a balance between the two."

There is still no permanent solution to the technological limits built into modern computing systems, according to Dr Ulhaq.

"There is a similar bug called the 2038 bug, expected to occur in 2038, and this is about time calculations for 32 bit systems," he said.

"Most people have moved to 64 bit systems but the CPU of a 32 bit system has a limitation of not being able to calculate the time past about 2 billion seconds. The computer systems started calculations in 1970 but once we reach that limit it will reset to 1970 again.

"The 64 bit systems have an even larger time limitation but we could reach it eventually."
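The limit Dr Ulhaq describes can be worked through in a short Python sketch. It assumes time is kept as a count of seconds since January 1, 1970 (UTC) in a signed 32-bit integer, which is a common convention but an assumption here. Under that assumption the counter becomes negative when it overflows, so the decoded date lands in December 1901 rather than at 1970 itself. The helper names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1      # 2,147,483,647: the "about 2 billion seconds" limit
INT64_MAX = 2**63 - 1


def wrap_int32(n: int) -> int:
    """Simulate storing n in a signed 32-bit integer (two's complement wraparound)."""
    return (n + 2**31) % 2**32 - 2**31


def from_epoch_seconds(seconds: int) -> datetime:
    """Convert a count of seconds since 1970 into a UTC date and time."""
    return EPOCH + timedelta(seconds=seconds)


last_valid = from_epoch_seconds(INT32_MAX)
after_overflow = from_epoch_seconds(wrap_int32(INT32_MAX + 1))
print(last_valid)          # 2038-01-19 03:14:07+00:00
print(after_overflow)      # 1901-12-13 20:45:52+00:00

# A signed 64-bit counter has a limit too, but it is hundreds of billions of years away.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60
print(INT64_MAX // SECONDS_PER_YEAR > 290_000_000_000)   # True
```

The last line shows why moving to 64-bit time values is treated as a practical fix even though, as the quote notes, a limit still exists in principle.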

Dr Ulhaq said modern technology is evolving at an exponential rate and the world we see today will be drastically different in the future.

"If you told someone in 1999 they would have a small device which is more powerful than a computer, that they can touch to access, watch videos on and be connected to the entire world, they wouldn't believe you.

"But now we have seen the revolution and we are the generation of believers in the power of technology."

In the next 20 years, desktop systems will become increasingly cloud-based, artificial intelligence will begin to replace human workforces, and FinTech (financial technology) such as digital currency will replace traditional third-party banking, according to Dr Ulhaq.

If we've learned anything from the past, it is to be prepared.
